With GLM-5.1, Zhipu AI is introducing a new open-weight model aimed at long-running, agent-based programming tasks. The company’s core argument is that existing models, including its own predecessor GLM-5, tend to exhaust their repertoire too quickly on complex problems. They apply familiar solution strategies, make some early progress, and then stall. Simply giving them more compute time, Zhipu argues, does not solve that limitation.

According to Zhipu AI, GLM-5.1 addresses this by repeatedly reviewing its own strategy, identifying dead ends, and trying new approaches. The company describes the process as optimization across “hundreds of rounds and thousands of tool calls.”

So far, however, Zhipu AI has demonstrated this through three scenarios conducted by the company itself. Independent evaluations have not yet been published.

Model reportedly changes its own strategy

In the first scenario, GLM-5.1 was tasked with optimizing a vector database - a system used to search large datasets and retrieve similar entries. The goal was to maximize the number of search queries answered per second without sacrificing accuracy. In a standard 50-run benchmark, Claude Opus 4.6 had previously achieved what Zhipu says was the best result: 3,547 queries per second.

Zhipu gave GLM-5.1 unlimited attempts instead. The model decided for itself when to submit a new version and what to try next. After more than 600 iterations and over 6,000 tool calls, it reportedly reached 21,500 queries per second - about six times the previous best score.

According to Zhipu, the model repeatedly made major strategic shifts during the run. Around iteration 90, for example, it moved from a full search across all data to a more efficient clustering approach. Around iteration 240, it introduced a two-stage pipeline that first performs a coarse filter and then a more precise reranking step. Zhipu says it identified six such structural shifts over the full run, each initiated by the model itself.

GPU optimization shows progress, but not the lead

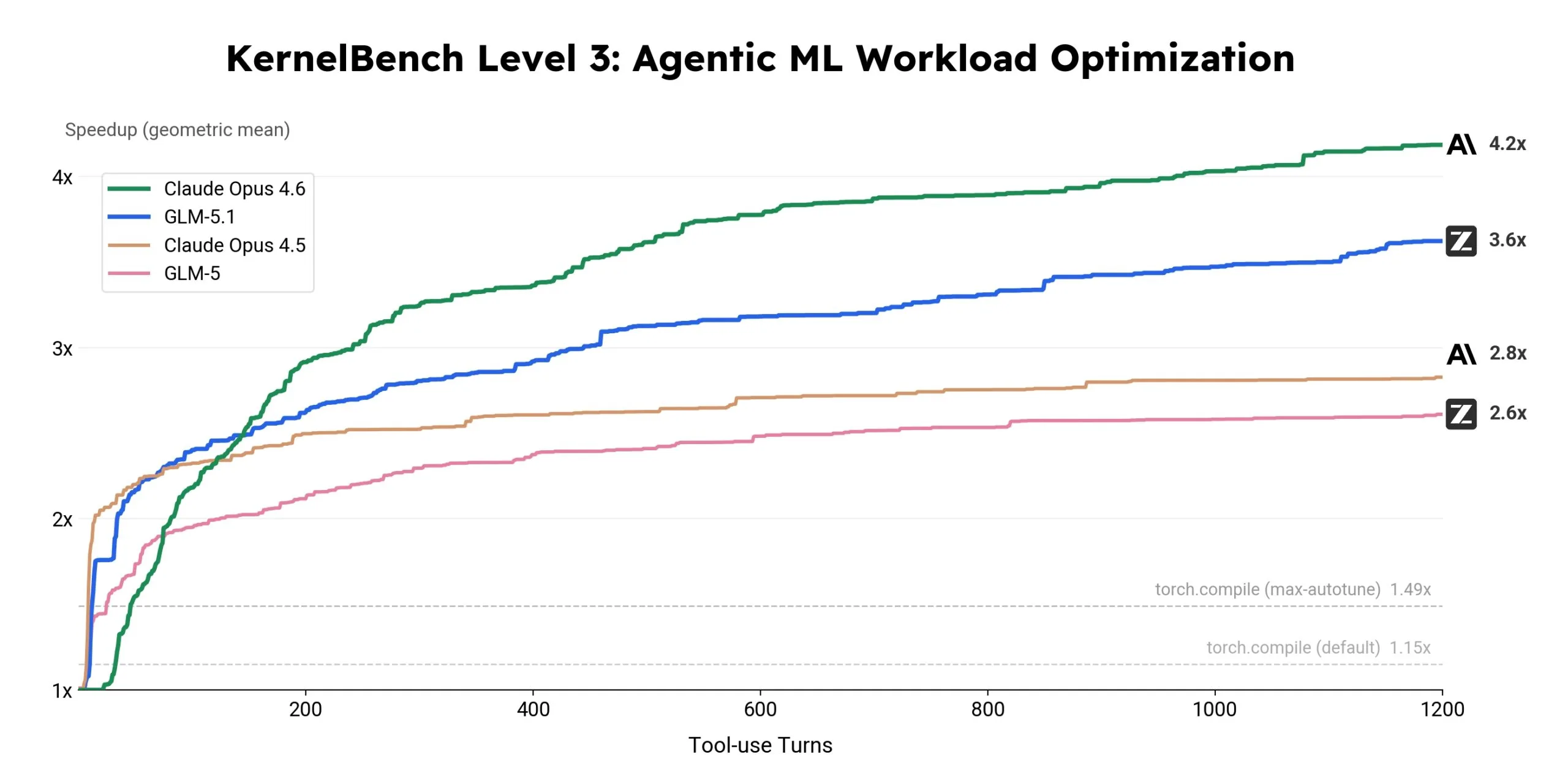

In the second scenario, the model was asked to rewrite existing machine-learning code so it would run faster on GPUs. Here, GLM-5.1 reportedly achieved a 3.6x speedup over the baseline implementation and continued improving even in later stages of the process. GLM-5, by contrast, plateaued much earlier.

Claude Opus 4.6 remains clearly ahead in this benchmark with a 4.2x speedup and still appears to have headroom at the end. GLM-5.1 broadens the productive horizon relative to its predecessor, but it does not close the gap to the strongest competing model.

A Linux desktop built from a single prompt

The third scenario is the most unusual. GLM-5.1 was asked to build a complete Linux desktop environment as a web application, without starter code or intermediate instructions. According to Zhipu, most models produce a basic shell with a taskbar and a few placeholder windows, then effectively stop there.

GLM-5.1 was placed in a loop where, after each round, it reviewed its own output and decided what was still missing or needed improvement. After eight hours, Zhipu says, the model produced a functional desktop environment with a file browser, terminal, text editor, system monitor, calculator, and games.

Strong in coding, weaker in reasoning

Beyond those three demos, Zhipu AI also released a benchmark table that paints a more mixed picture. In coding, GLM-5.1 performs at or near the top in several tests. On SWE-Bench Pro, a software engineering benchmark, it reportedly scores 58.4 percent - the highest result among the freely available tested models, narrowly ahead of GPT-5.4 at 57.7 percent and Claude Opus 4.6 at 57.3 percent. On CyberGym, a cybersecurity benchmark, it posts the top score at 68.7. Zhipu does note, however, that Gemini 3.1 Pro and GPT-5.4 refused some tasks for safety reasons, which may have depressed their results.

On Humanity’s Last Exam, a broad knowledge benchmark, GLM-5.1 scores 31 percent, well behind Gemini 3.1 Pro at 45 and GPT-5.4 at 39.8. On GPQA-Diamond, which tests scientific reasoning, the model scores 86.2, again trailing Gemini 3.1 Pro at 94.3 and GPT-5.4 at 92.

Results are similarly mixed on agentic tasks. In Vending Bench 2, where a model must run a simulated vending-machine business, GLM-5.1 ends with a balance of $5,634. Claude Opus 4.6 reaches $8,018 - a substantial lead. In repository generation on NL2Repo, Claude Opus 4.6 also leads clearly with 49.8 versus 42.7 for GLM-5.1.

In the Artificial Analysis Intelligence Index, the model currently ranks just behind Anthropic’s Claude 4.6 Sonnet.

Zhipu AI itself acknowledges several open challenges. The model still needs to learn to recognize dead ends earlier, maintain coherence across thousands of tool calls, and assess itself more reliably on tasks without clear success metrics. The company describes GLM-5.1 as a “first step” in that direction.

The model is available under the MIT license on Hugging Face and ModelScope, and can also be accessed through the API platforms api.z.ai and BigModel.cn. It can be integrated into coding agents such as Claude Code and OpenClaw. For local deployment, Zhipu supports the inference frameworks vLLM and SGLang, with setup instructions provided in its GitHub repository. GLM-5.1 is also expected to become available through the Z.ai chat interface in the coming days.

Zhipu AI is expanding its model lineup quickly

Zhipu AI recently introduced GLM-5V-Turbo, a multimodal coding model that can generate code directly from images and video. Before that, in February, the company released GLM-5, a 744-billion-parameter open-weight model intended to compete with leading proprietary systems on coding tasks. GLM-5.1 appears to build on those earlier releases and adds long-horizon capabilities that Zhipu hopes will differentiate it from Chinese rivals.

Competition among Chinese AI labs remains intense. Alongside Zhipu AI, companies such as Moonshot AI with Kimi K2.5 and Alibaba with Qwen3.5 are also pushing into the market for autonomous coding agents.

Zhipu AI is not alone in betting on persistent AI agents. In early 2026, Cursor reportedly had hundreds of GPT-5.2 agents work for a week on building a web browser. The resulting Rust codebase, which exceeded three million lines, was later described by the Software Improvement Group as barely maintainable and ranked among the bottom five percent of evaluated software systems.

ES

ES  EN

EN