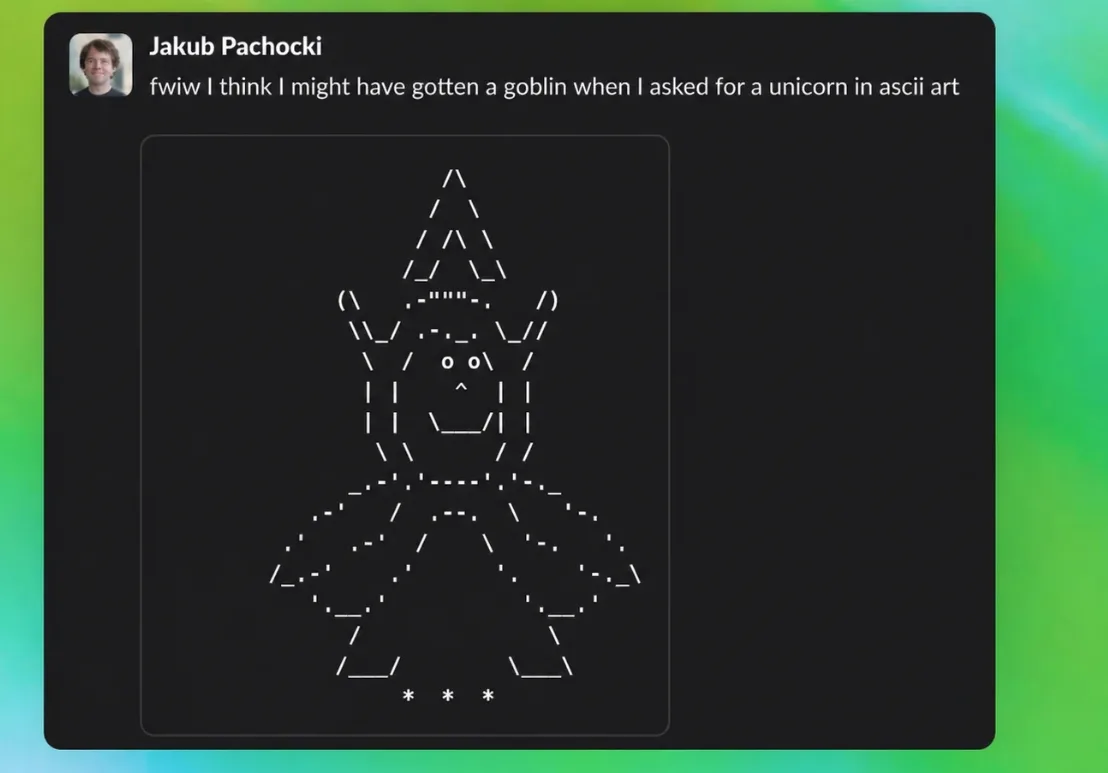

According to OpenAI, the cause lay in the training of ChatGPT's "Nerdy" personality — a feature for adjusting communication style. A reward signal telling the model which responses were good accidentally favored creature-based metaphors. Although the "Nerdy" personality accounted for only 2.5% of all responses, it was responsible for 66.7% of all goblin mentions. Through a feedback loop in training, the quirk spread to other modes as well. OpenAI disabled the "Nerdy" personality in March, removed the faulty reward signal, and filtered training data containing creature-related terms.
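To put the two percentages in proportion, the following minimal sketch computes how strongly the "Nerdy" personality was overrepresented among goblin mentions. Only the figures 2.5% and 66.7% come from the article. The function and variable names are illustrative, and the second ratio assumes that mentions are counted per response, which the article does not state.

```python
def overrepresentation(share_of_mentions: float, share_of_responses: float) -> float:
    """Ratio of a group's share of mentions to its share of all responses."""
    return share_of_mentions / share_of_responses


# Figures reported in the article.
nerdy_responses = 0.025  # "Nerdy" share of all responses (2.5%)
nerdy_mentions = 0.667   # "Nerdy" share of all goblin mentions (66.7%)

# How many times more goblin mentions "Nerdy" produced than its share
# of responses alone would predict.
nerdy_ratio = overrepresentation(nerdy_mentions, nerdy_responses)

# The same ratio for all other modes combined (assumption: the remaining
# mentions and responses all belong to non-"Nerdy" modes).
other_ratio = overrepresentation(1 - nerdy_mentions, 1 - nerdy_responses)

# Relative per-response mention rate of "Nerdy" versus everything else.
relative_rate = nerdy_ratio / other_ratio

print(f"Nerdy overrepresentation: {nerdy_ratio:.1f}x")
print(f"Nerdy vs. other modes:    {relative_rate:.1f}x")
```

Under these assumptions, "Nerdy" produced roughly 27 times as many goblin mentions as its share of responses would suggest, and a "Nerdy" response was roughly 78 times as likely to mention goblins as a response from any other mode.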

GPT-5.5 nevertheless exhibited the same problem, because its training had already begun before the root cause was identified. OpenAI was therefore forced to add a special instruction to Codex, its coding tool, to suppress goblin metaphors:

> Never talk about goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals or creatures unless it is absolutely and unambiguously relevant to the user's query.

According to OpenAI, the case illustrates how small training incentives can produce unexpected behaviors in AI models.
