For comparison, GPT-5.2 Instant sometimes produced responses that felt overly cautious or preachy when dealing with sensitive topics. The updated GPT-5.3 Instant model uses moralizing disclaimers far less often.

When working with information from the internet, GPT-5.3 Instant generates more structured and meaningful answers. The model is better at recognizing the context and implied meaning of a request while cross-checking retrieved information with its internal knowledge and reasoning.

Overall, the conversational style of the new model has become more natural. Unnecessary phrases such as “Stop. Take a breath.” have largely disappeared. The model also hallucinates less and performs better in writing tasks. However, OpenAI warned that in some languages the responses may still sound overly literal.

GPT-5.3 Instant is already available to all ChatGPT users and to developers through the API. Support for GPT-5.2 Instant will continue until June 3, 2026.

GitHub alternative

According to several media reports, OpenAI is also developing a potential alternative to GitHub. The project is still at an early stage, but a strategic decision to move forward has reportedly already been made.

The service is expected to operate on a paid subscription model, although journalists have not yet disclosed additional details.

One possible motivation behind the project is the recurring outages on GitHub that have reportedly caused difficulties for OpenAI engineers.

If OpenAI launches its own code hosting platform, it would become a direct competitor to its major investor Microsoft, which owns GitHub.

At the end of February, OpenAI raised $110 billion in funding at a valuation of $730 billion, making the round one of the largest startup investments in history.

Updated Gemini

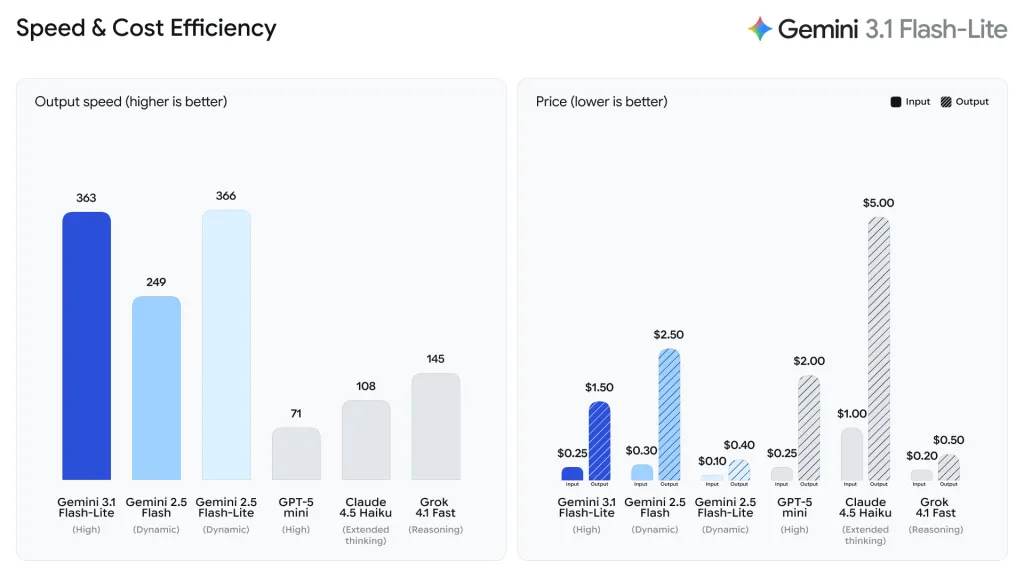

Meanwhile, Google has released a preview version of Gemini 3.1 Flash-Lite, described as the most cost-efficient and fastest model in the Gemini 3 family.

The model costs $0.25 per million input tokens and $1.50 per million output tokens.
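To make the pricing concrete, here is a minimal sketch of a cost estimate based on the two per-million-token rates quoted above. The function name `estimate_cost` and the workload figures are hypothetical, chosen only for illustration; the sketch ignores any discounts, caching, or other billing rules that are not mentioned in the article.

```python
from decimal import Decimal

# Prices quoted in the article, in USD per one million tokens.
INPUT_PRICE_PER_MILLION = Decimal("0.25")
OUTPUT_PRICE_PER_MILLION = Decimal("1.50")
TOKENS_PER_MILLION = Decimal(1_000_000)


def estimate_cost(input_tokens: int, output_tokens: int) -> Decimal:
    """Return the estimated cost in USD for the given token counts."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    input_cost = Decimal(input_tokens) / TOKENS_PER_MILLION * INPUT_PRICE_PER_MILLION
    output_cost = Decimal(output_tokens) / TOKENS_PER_MILLION * OUTPUT_PRICE_PER_MILLION
    return input_cost + output_cost


# Hypothetical workload: 40 million input tokens and 10 million output tokens.
total = estimate_cost(40_000_000, 10_000_000)
print(f"Estimated cost: ${total:.2f}")
```

For this hypothetical workload, the input side comes to $10 and the output side to $15, for a total of $25. At these rates an output token costs six times as much as an input token, so output volume tends to dominate the bill for generation-heavy tasks.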

The model is optimized for building AI agents and scaling applications. It can handle tasks such as translating large volumes of data, moderating content, and generating user interfaces.

According to independent researchers at Artificial Analysis, the new model processes information approximately 2.5 times faster than Gemini 2.5 Flash.

Gemini 3.1 Flash-Lite is currently available in preview for developers through the Gemini API and Google AI Studio, and for businesses via Vertex AI.

Earlier, in February, Google also introduced Gemini 3.1 Pro, an upgraded AI model that set new benchmark records.
