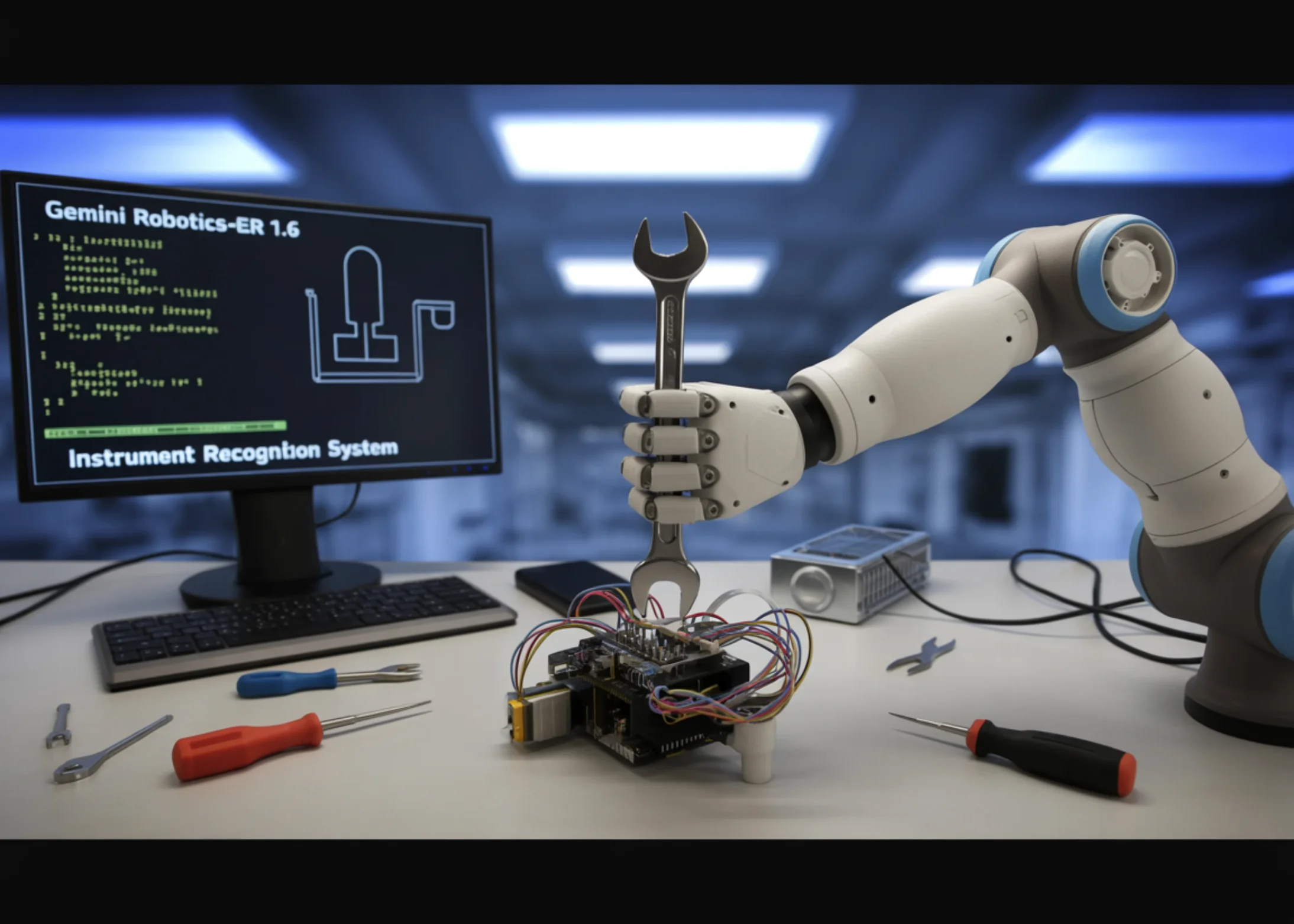

The model acts as a higher-level reasoning layer for robots. It can analyze the surrounding environment, break tasks into logical steps, and call tools such as Google Search or vision-language-action models to complete more complex workflows.

According to Google DeepMind, Gemini Robotics-ER 1.6 outperforms both Gemini Robotics-ER 1.5 and Gemini 3.0 Flash on several embodied reasoning tasks, including pointing to objects, counting items, and detecting whether a task was completed successfully.

One of the most notable improvements is instrument reading. Google says the new model is significantly better at interpreting gauges, pressure dials, sight glasses, and similar industrial indicators. This capability was developed in collaboration with Boston Dynamics, whose robot Spot uses it for facility inspection workflows.

To achieve higher accuracy, the model combines agentic vision with code execution. In practice, it first zooms into an image to inspect small visual details, then uses pointing functions and code to calculate proportions and scale intervals, and finally applies world knowledge to interpret what the measured value actually means in context.
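The "proportions and scale intervals" step can be illustrated with a small Python sketch. It is a hypothetical illustration, not how Gemini Robotics-ER 1.6 computes readings internally. It assumes the model returns pointed locations as a JSON list of `{"point": [y, x], "label": ...}` objects with coordinates normalized to 0-1000. That is the convention documented for Gemini Robotics-ER 1.5 and is only assumed here for 1.6. It also assumes the dial reads clockwise from a minimum tick to a maximum tick. The labels `center`, `min`, `max` and `needle`, and all function names, are made up for this example.

```python
import json
import math


def to_pixels(point, width, height):
    # Assumed point format: [y, x], each normalized to the range 0-1000.
    y, x = point
    return (x * width / 1000.0, y * height / 1000.0)


def parse_points(response_text, width, height):
    # Hypothetical response: a JSON list of {"point": [y, x], "label": str}.
    items = json.loads(response_text)
    return {item["label"]: to_pixels(item["point"], width, height) for item in items}


def angle_deg(center, p):
    # Image y grows downward, so flip dy to get a counterclockwise-positive angle.
    dx = p[0] - center[0]
    dy = center[1] - p[1]
    return math.degrees(math.atan2(dy, dx))


def gauge_reading(points, min_value, max_value):
    # Expects pixel coordinates for the hypothetical labels center/min/max/needle.
    center = points["center"]
    start = angle_deg(center, points["min"])

    def clockwise_from_min(label):
        # Degrees swept clockwise from the minimum tick to the labeled point.
        return (start - angle_deg(center, points[label])) % 360.0

    sweep = clockwise_from_min("max")  # total angular span of the printed scale
    fraction = clockwise_from_min("needle") / sweep
    # Linear interpolation between the scale's end values.
    return min_value + fraction * (max_value - min_value)


if __name__ == "__main__":
    # Made-up points for a 0-10 bar dial with a 270-degree sweep, needle straight up.
    response = json.dumps([
        {"point": [500, 500], "label": "center"},
        {"point": [712, 288], "label": "min"},
        {"point": [712, 712], "label": "max"},
        {"point": [200, 500], "label": "needle"},
    ])
    pts = parse_points(response, width=1000, height=1000)
    print(gauge_reading(pts, min_value=0.0, max_value=10.0))  # 5.0
```

This arithmetic only yields a number. The final step described above, deciding whether 5 bar is normal or alarming for that particular piece of equipment, relies on the model's world knowledge and is not captured by the sketch.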

Gemini Robotics-ER 1.6 is available now through the Gemini API and Google AI Studio. Google has also released a developer Colab example to help teams configure the model and start testing embodied reasoning workflows.
