Claude Managed Agents is a hosted service built on the Claude platform that lets developers manage long-running agents through a set of core interfaces.

The system is designed to carry out tasks over extended periods, use tools, run code, edit files, interact with external services, and continue operating even after failures.

The problem

Until now, developers launching AI agents have faced several recurring issues:

- agents would lose context, forcing resets;
- models would end tasks too early, requiring custom workarounds;
- models struggled with long-running assignments.

For example, Claude Sonnet 4.5 could prematurely stop working on a task as it approached the limits of its context window.

The solution

The cloud-based Claude Managed Agents service is designed to handle background processes automatically, including memory management and error recovery.

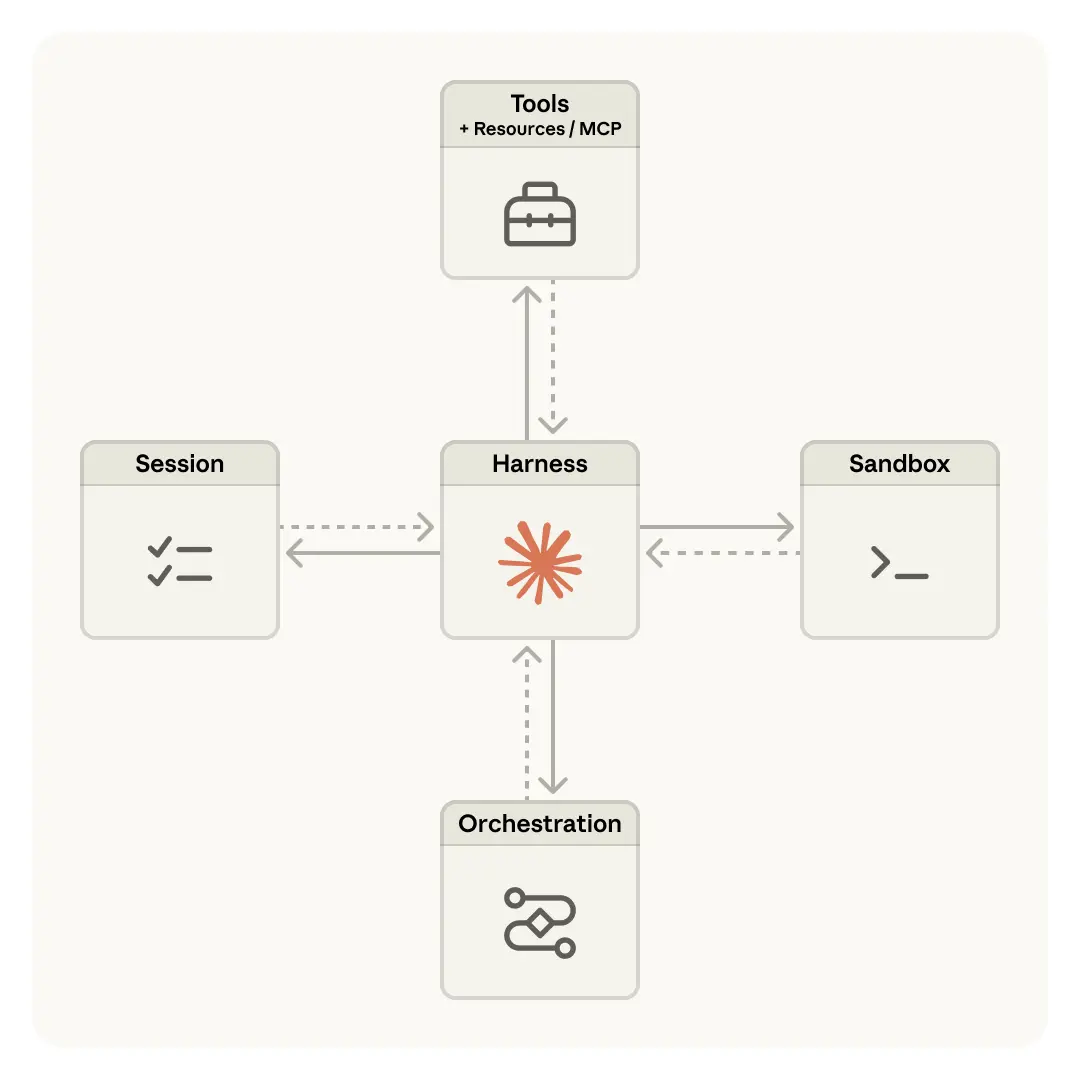

The central architectural idea is to split the agent into independent components. Previously, everything was handled in one place:

- the model itself and the logic for calling it;
- code execution;
- memory and session state;
- permissions and tokens.

Anthropic has now separated the system into three main layers:

- Session - a log of all actions and events. It acts as the agent’s long-term memory, recording step history and helping bypass context-length limitations.
- Control logic - the “conductor” that calls Claude, decides what context to send to the model, and manages the execution loop.
- Execution environment - the layer where the LLM can run code, edit files, and interact with its surrounding environment.

This structure allows each part to operate independently, meaning that a local failure in one process no longer causes the entire session to shut down.
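The three-layer split can be illustrated with a small sketch. This is not the Claude Managed Agents API: every name here (`Session`, `Sandbox`, `control_loop`, `scripted_model`) is hypothetical, and the model is replaced by a scripted stub. It only aims to show how an append-only session log, a control loop, and a replaceable execution environment might stay independent, so that a sandbox failure is recorded as an event rather than ending the session.

```python
from dataclasses import dataclass, field


@dataclass
class Session:
    """Hypothetical append-only event log (the agent's long-term memory)."""
    events: list = field(default_factory=list)

    def append(self, kind: str, payload: str) -> None:
        self.events.append({"kind": kind, "payload": payload})

    def recent(self, n: int) -> list:
        # Only a window of the log is sent to the model, so the full
        # history can grow beyond what a context window would hold.
        return self.events[-n:]


class Sandbox:
    """Hypothetical execution environment; the 'crash' command simulates a failure."""

    def run(self, command: str) -> str:
        if command == "crash":
            raise RuntimeError("sandbox died")
        return f"ran {command}"


def control_loop(session, model, make_sandbox, max_steps=10, window=4):
    """Hypothetical control logic: pick context, call the model, run the action."""
    sandbox = make_sandbox()
    for _ in range(max_steps):
        action = model(session.recent(window))
        if action is None:  # the model signals that the task is finished
            session.append("done", "")
            break
        session.append("action", action)
        try:
            session.append("result", sandbox.run(action))
        except RuntimeError as exc:
            # The failure is logged and the sandbox is replaced;
            # the session and the loop keep going.
            session.append("error", str(exc))
            sandbox = make_sandbox()


def scripted_model(plan):
    """Stand-in for a real model call: replays a fixed list of actions."""
    steps = iter(plan)
    return lambda context: next(steps, None)


session = Session()
control_loop(session, scripted_model(["ls", "crash", "pytest"]), Sandbox)
kinds = [event["kind"] for event in session.events]
print(kinds)
```

In this sketch the second action crashes the sandbox, yet the log still records the error and the third action runs in a fresh sandbox. A real system would presumably persist the log and run each layer as a separate process; both are left out here for brevity.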

Anthropic is positioning Claude Managed Agents as a durable infrastructure layer that can outlast the evolution of individual models. In effect, it is presenting the system as something like an operating system for AI agents.
