Both are now available as a public preview through the paid tiers of the Gemini API and are aimed at developers who want to automate large-scale research tasks. With a single API call, developers can launch research workflows that, for the first time, combine the open web with proprietary data streams and deliver fully source-grounded analyses.

Two versions for different use cases

The standard Deep Research agent replaces the preview version released in December. Google says it offers higher quality while reducing both latency and cost. It is designed for applications where speed matters and users expect an immediate response, such as chat interfaces.

Deep Research Max, by contrast, is optimized for maximum thoroughness. The agent uses extended test-time compute to reason, search, and refine its final report iteratively. Google cites asynchronous background workflows as the typical use case, such as an overnight cron job that generates comprehensive due diligence reports for an analyst team by the next morning.
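The split between the two agents can be sketched as a simple routing rule. The snippet below is only an illustration: the tier labels, the `ResearchJob` class, and `choose_agent` are hypothetical names invented for this example, not identifiers from the Gemini API.

```python
from dataclasses import dataclass

# Hypothetical tier labels for illustration only;
# these are NOT official Gemini API model identifiers.
STANDARD_AGENT = "deep-research"
MAX_AGENT = "deep-research-max"


@dataclass(frozen=True)
class ResearchJob:
    """A research task and whether a user is actively waiting on it."""
    query: str
    interactive: bool


def choose_agent(job: ResearchJob) -> str:
    """Route interactive jobs to the low-latency agent and
    background jobs to the more thorough one."""
    return STANDARD_AGENT if job.interactive else MAX_AGENT


# A chat question goes to the standard agent,
# an overnight due diligence report goes to Max.
chat_job = ResearchJob("Summarize this week's chip export news", interactive=True)
nightly_job = ResearchJob("Due diligence report on a supplier", interactive=False)
```

In practice the decision would likely also weigh cost and deadlines, but a single "is someone waiting?" flag captures the distinction Google draws between the two versions.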

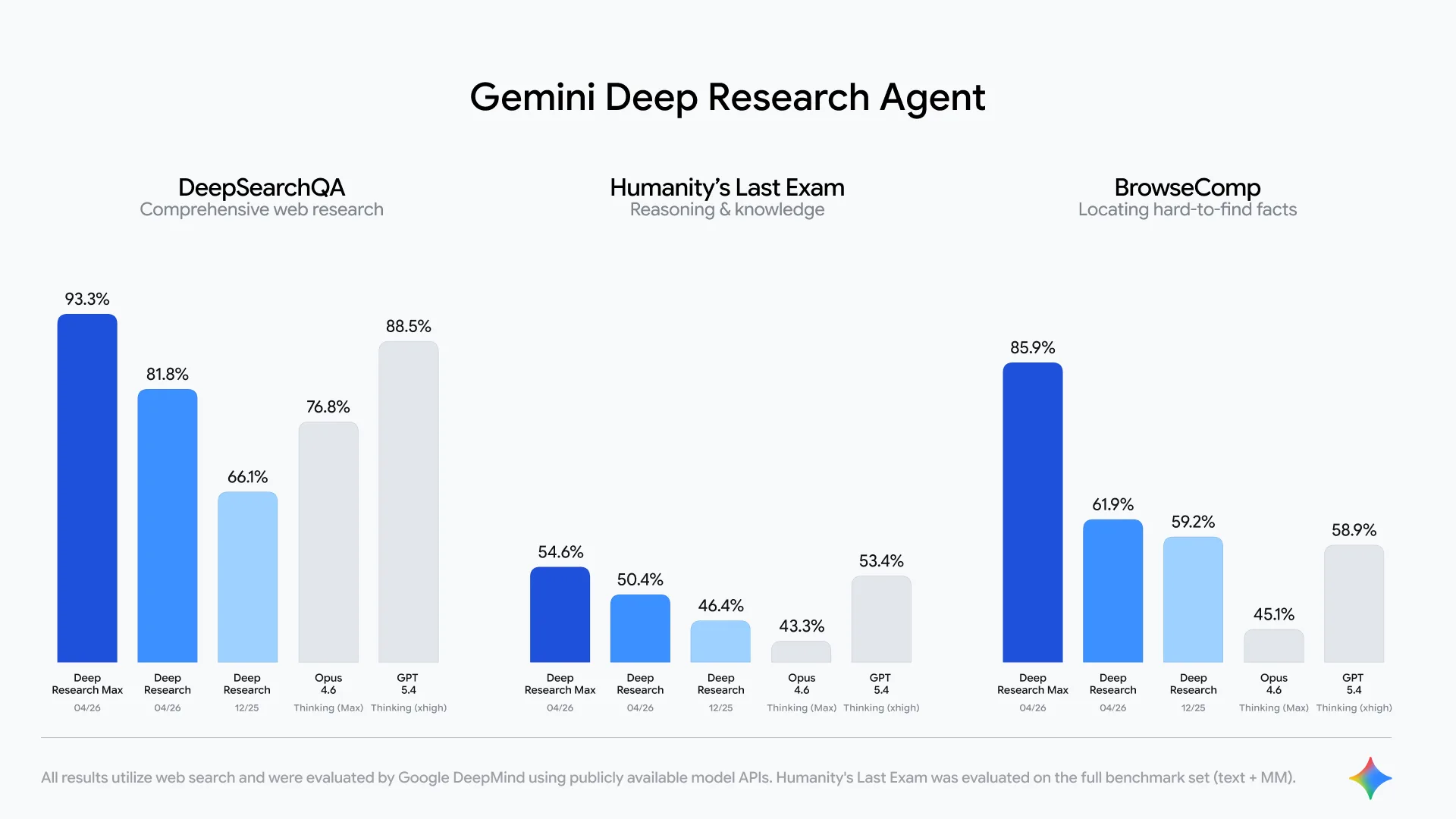

According to Google’s internal benchmarks, Deep Research Max shows a clear performance jump on retrieval and reasoning tasks. Compared with the previous version, the agent reportedly consults more sources and identifies critical nuances that the older system often missed.

The comparison with OpenAI’s GPT-5.4 is not entirely straightforward. While GPT-5.4 offers strong autonomous web search, it is not specifically optimized for deep research workflows. OpenAI provides a separate deep research agent for that use case, which after the February update is based on GPT-5.2 rather than GPT-5.4. OpenAI’s strongest search-focused model is GPT-5.4 Pro, which Google apparently did not include in its comparison. According to OpenAI, GPT-5.4 Pro scores up to 89.3 percent on the agentic search benchmark BrowseComp, while GPT-5.4 reaches 82.7 percent.

Anthropic has also reported a higher BrowseComp result for Opus 4.6, 84 percent, than the figure shown by Google. According to Anthropic, the model achieved that score without reasoning, because it performed better in that setting than with high reasoning intensity. The discrepancy likely comes down to the testing setup: whether the models were evaluated through the raw API or within the AI labs’ own research tools. For that reason, Google’s presentation should be read with caution, even if it is not necessarily wrong. At a minimum, the comparison lacks transparency.

MCP support opens the agent to proprietary data

One of the most important additions is support for the Model Context Protocol (MCP). This allows developers to connect Deep Research to their own data sources and specialized professional data streams, such as financial or market data providers. By supporting arbitrary tool definitions, Google says the agent evolves from a pure web searcher into an autonomous system that can also investigate specialized databases.

For the first time in the Gemini API, the agent can also generate charts and infographics natively inside reports, rendered either as HTML or as images produced with Nano Banana, Google’s image generation model. This is intended to help developers present complex datasets visually.

Other new capabilities include the option to review and refine the agent’s research plan before execution (Collaborative Planning), multimodal input from PDFs, CSVs, images, audio, and video, as well as real-time streaming of intermediate steps. Developers can also disable web access entirely and restrict the agent to research based only on proprietary data.
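To make these options concrete, here is a minimal sketch of how an application might model them on its own side. The `ResearchSettings` class and all of its field names are hypothetical and do not reflect the actual request schema of the Gemini API. The validation rule is an assumption as well: if web access is switched off, the agent presumably needs at least one proprietary source to work with.

```python
from dataclasses import dataclass, field


@dataclass
class ResearchSettings:
    """Application-side options for a research run.

    All field names are hypothetical; they are not Gemini API parameters.
    """
    web_access: bool = True      # allow open-web search
    review_plan: bool = False    # pause for plan review before execution
    stream_steps: bool = False   # surface intermediate steps as they happen
    private_sources: list[str] = field(default_factory=list)  # e.g. MCP server names

    def validate(self) -> None:
        """Reject a configuration that leaves the agent with nothing to search."""
        if not self.web_access and not self.private_sources:
            raise ValueError(
                "web access is disabled, so at least one private source is required"
            )


# A run restricted to proprietary data, with plan review enabled.
private_only = ResearchSettings(
    web_access=False,
    review_plan=True,
    private_sources=["internal-market-data"],
)
private_only.validate()  # passes: one private source is configured
```

Checking the configuration before launching a long-running job is a cheap way to catch a setup that could never produce a grounded report.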

According to Google, both agents run on the same infrastructure that powers research features in end-user products such as the Gemini app, NotebookLM, Google Search, and Google Finance. Developers can start building their own research workflows through the Interactions API. Google also says both agents will soon become available to startups and enterprises through Google Cloud.

Google is pushing Gemini further into the agentic research market with a clear split between low-latency and high-thoroughness workflows. The most important upgrade is not just stronger reasoning, but the combination of open-web research, proprietary data access, and native report generation, which makes these agents more practical for real enterprise use.
