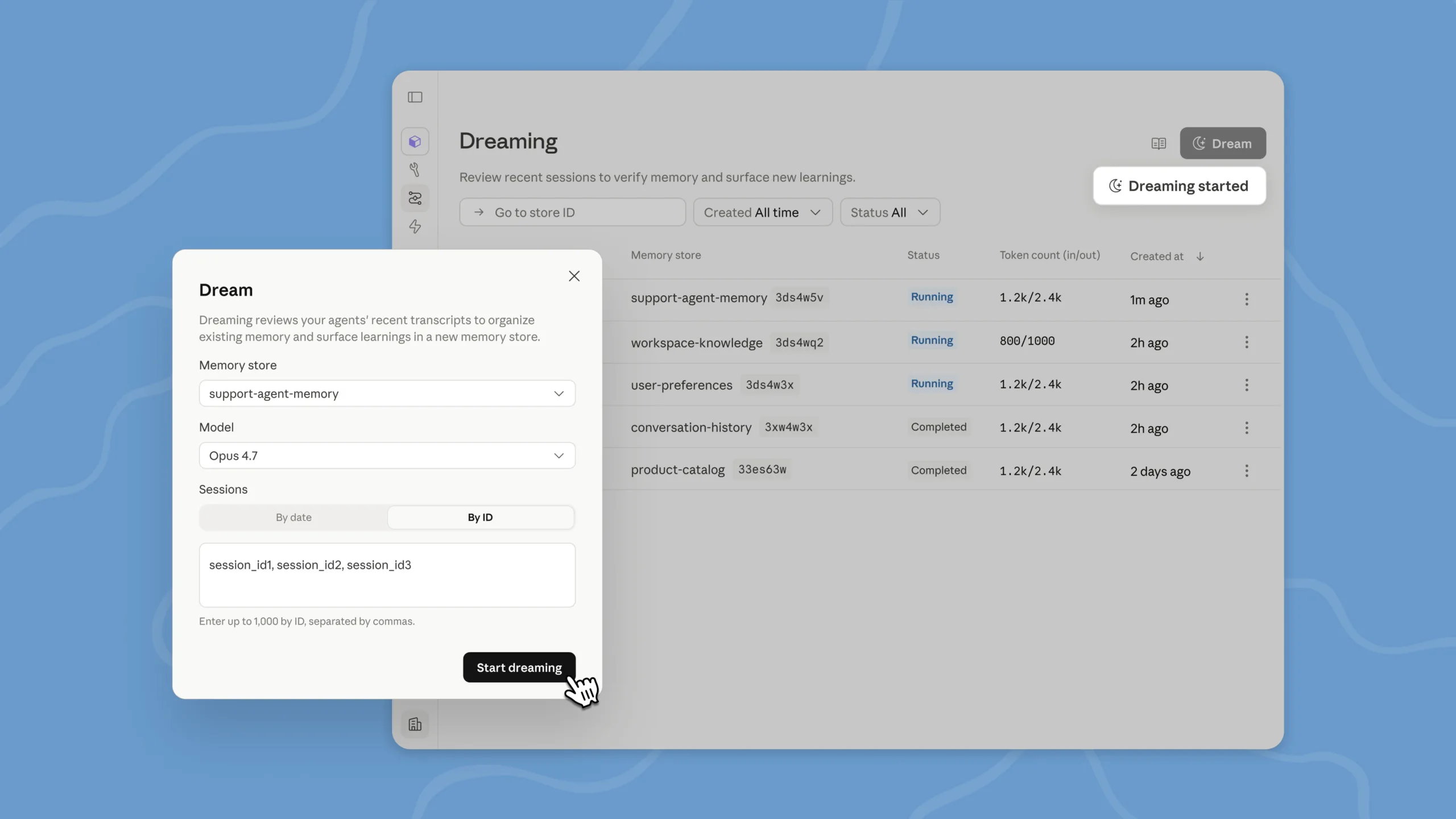

The first new feature is Dreaming — a scheduled process that reviews previous agent sessions, identifies patterns, and carries useful lessons across sessions. These can include recurring errors, successful workflows, or instructions that should be preserved for future tasks.

Technically, Dreaming runs as an asynchronous job. It reads an existing Memory Store and, optionally, up to 100 past sessions, removes duplicate or outdated entries, and produces a new organized Memory Store. The original store remains unchanged. The feature currently supports Claude Opus 4.7 and Claude Sonnet 4.6, with billing based on standard API token pricing.
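The consolidation step described above can be illustrated with a small local sketch. This is not Anthropic's API: the `MemoryEntry` fields, the `consolidate` function, and the "newest entry per key wins" rule are all assumptions made for illustration. Only the 100-session limit and the fact that the original store is left unchanged come from the article.

```python
from dataclasses import dataclass

MAX_SESSIONS = 100  # limit stated in the article


@dataclass(frozen=True)
class MemoryEntry:
    key: str         # hypothetical: the topic an entry is about
    text: str        # hypothetical: the remembered lesson
    updated_at: int  # hypothetical: larger means newer


def consolidate(store, session_notes):
    """Return a new, deduplicated store; the input store is not modified."""
    if len(session_notes) > MAX_SESSIONS:
        raise ValueError(f"at most {MAX_SESSIONS} sessions can be reviewed")
    # Flatten the existing entries and the entries drawn from past sessions.
    candidates = list(store) + [e for notes in session_notes for e in notes]
    latest = {}
    for entry in candidates:
        current = latest.get(entry.key)
        # Assumed rule: keep only the newest entry per key, which drops
        # exact duplicates and outdated versions alike.
        if current is None or entry.updated_at > current.updated_at:
            latest[entry.key] = entry
    return sorted(latest.values(), key=lambda e: e.key)
```

Because `consolidate` builds a fresh list and the entries are frozen, the caller's original store stays intact, mirroring the behavior the article describes.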

Outcomes: A Separate Grader Checks Results Against Fixed Criteria

Outcomes and Multiagent Orchestration are moving from research preview to public beta. With Outcomes, developers define a rubric — a document containing specific success criteria, such as “the CSV file includes a price column with numeric values.”

A separate evaluator, called a Grader, then checks the agent’s output in its own context window against those criteria. Because the Grader does not see the agent’s internal reasoning, it is less likely to be influenced by how the agent arrived at its answer.

If the result does not meet the rubric, the Grader identifies the gaps and the agent revises its work. By default, this loop runs up to three times; the limit can be raised to a maximum of 20 attempts.
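The grade-and-revise loop can be sketched locally as follows. Everything here is a hypothetical stand-in rather than the Managed Agents API: the rubric is modeled as a dictionary of named check functions, `grade` plays the role of the Grader, and `toy_agent` is a placeholder for a real agent. The default of three iterations, the cap of 20, and the CSV criterion come from the article.

```python
import csv
import io

DEFAULT_ITERATIONS = 3  # default stated in the article
MAX_ITERATIONS = 20     # upper limit stated in the article


def has_numeric_price_column(csv_text):
    """Rubric check: the CSV has a 'price' column and every value is numeric."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    if not rows or "price" not in rows[0]:
        return False
    try:
        for row in rows:
            float(row["price"])
    except (TypeError, ValueError):
        return False
    return True


def grade(output, rubric):
    """Hypothetical grader: sees only the output, returns the failed criteria."""
    return [name for name, check in rubric.items() if not check(output)]


def run_with_outcomes(agent, rubric, iterations=DEFAULT_ITERATIONS):
    """Call the agent until the rubric passes or the iteration budget runs out."""
    if not 1 <= iterations <= MAX_ITERATIONS:
        raise ValueError(f"iterations must be between 1 and {MAX_ITERATIONS}")
    gaps, output = [], None
    for attempt in range(1, iterations + 1):
        output = agent(gaps)          # the agent revises using the last feedback
        gaps = grade(output, rubric)  # the grader never sees agent reasoning
        if not gaps:
            return output, attempt, []
    return output, iterations, gaps


def toy_agent(gaps):
    """Placeholder agent: fixes the price column once the grader flags it."""
    if "numeric price column" in gaps:
        return "name,price\nwidget,9.99\n"
    return "name,price\nwidget,n/a\n"


rubric = {"numeric price column": has_numeric_price_column}
final_output, attempts, remaining = run_with_outcomes(toy_agent, rubric)
```

In this toy run the first attempt fails the rubric and the second passes, so `attempts` is 2 and `remaining` is empty.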

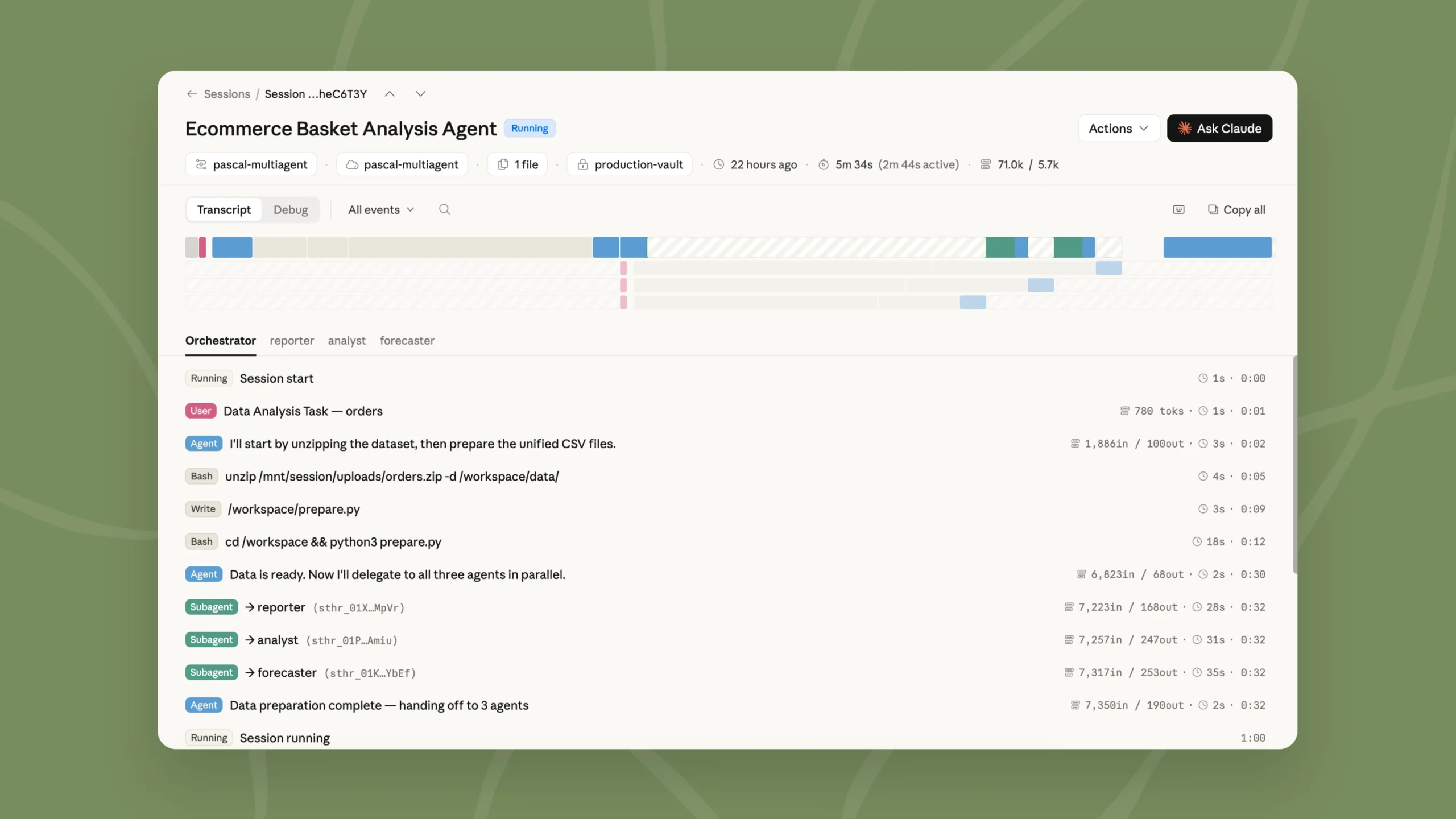

Multiagent Orchestration: A Coordinator Delegates Work to Specialists

With Multiagent Orchestration, a lead agent, or Coordinator, manages several specialized agents. Each agent runs in its own thread with an isolated context and has its own model, system prompt, and tools. All agents, however, share the same file system.

The Coordinator can distribute tasks in parallel — for example, assigning code review and test generation to different agents at the same time. Up to 20 different agents can be configured, with a maximum of 25 threads running simultaneously.
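The parallel delegation pattern can be approximated with Python's standard thread pool. This is a local analogy only, not how Managed Agents is implemented: the `coordinate` function and the agents-as-plain-functions model are assumptions, while the limits of 20 agents and 25 concurrent threads are taken from the article.

```python
from concurrent.futures import ThreadPoolExecutor

MAX_AGENTS = 20   # configured-agent limit stated in the article
MAX_THREADS = 25  # concurrent-thread limit stated in the article


def coordinate(agents, tasks):
    """Run each (agent_name, payload) task in parallel; results keep task order."""
    if len(agents) > MAX_AGENTS:
        raise ValueError(f"at most {MAX_AGENTS} agents can be configured")
    with ThreadPoolExecutor(max_workers=MAX_THREADS) as pool:
        # Each submitted task runs on its own worker thread, up to MAX_THREADS.
        futures = [pool.submit(agents[name], payload) for name, payload in tasks]
        return [future.result() for future in futures]


# Hypothetical specialists, modeled as plain functions for the sketch.
agents = {
    "reviewer": lambda code: f"review:{len(code)} chars",
    "tester": lambda code: f"tests for:{code}",
}
results = coordinate(agents, [("reviewer", "abc"), ("tester", "abc")])
```

Collecting results from the futures in submission order keeps the output deterministic even though the two tasks may finish in either order.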

Dreaming is currently in research preview, with access granted through a request form on Claude’s website. Outcomes, Multiagent Orchestration, and Memory are in public beta as part of Managed Agents. Further details can be found in Anthropic’s documentation, the Claude blog, and the Claude Console.

Readers can also compare Claude with other leading tools in the AIWireMedia ranking of the best AI platforms, which helps users choose the right service based on capabilities, pricing, privacy, integrations, and user feedback.

