The virtual assistants are powered by Codex and are designed to handle tasks that employees already perform today, including preparing reports, writing code, and responding to messages.

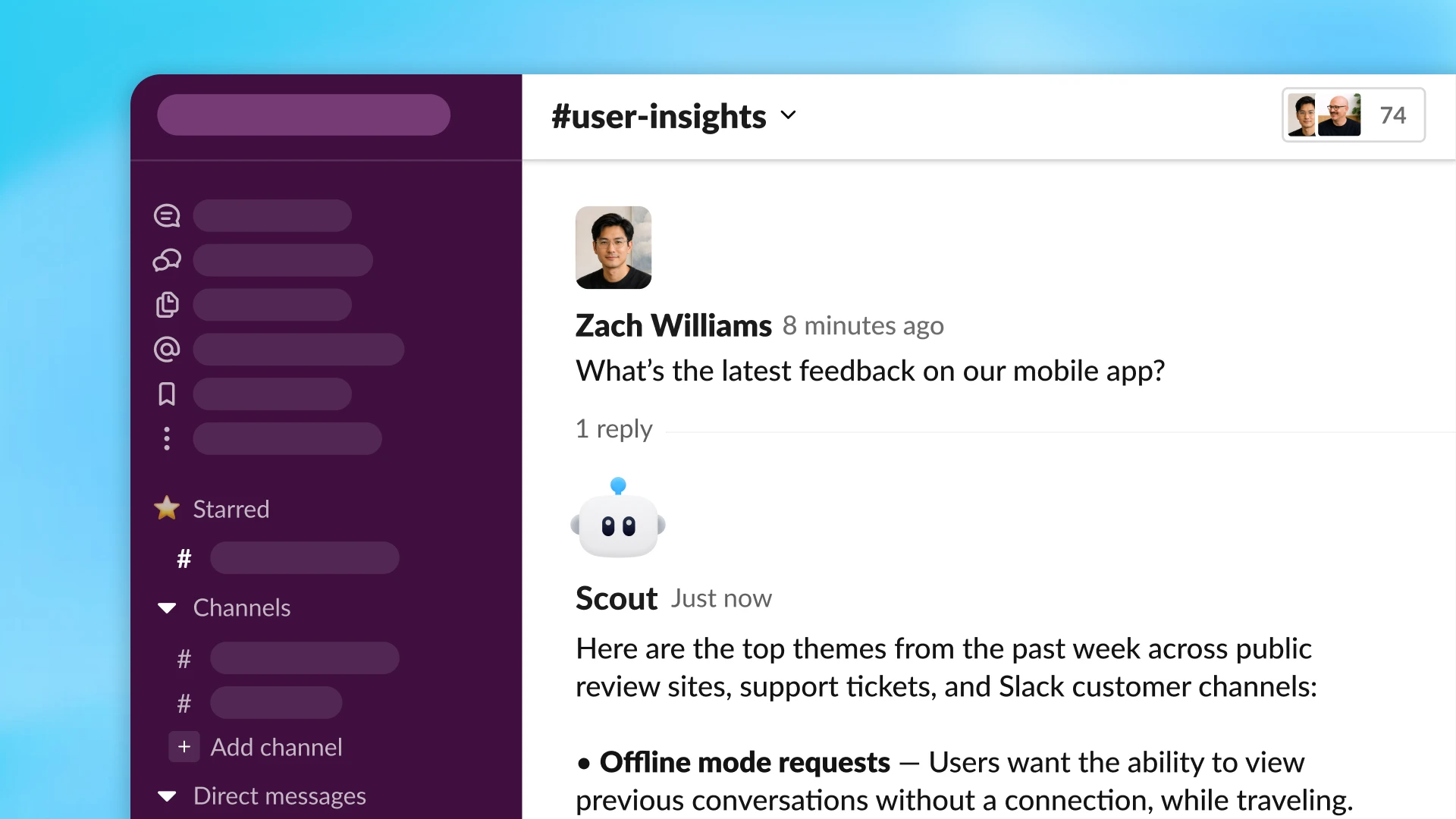

Because the agents run in the cloud, they can continue working on assigned tasks even when the human user is away. They are built for collaborative use inside organizations: a team can create an assistant once and then use it together in ChatGPT or Slack.

“AI has already helped people become more productive in individual work. But many important company workflows depend on shared context, handoffs between employees, and cross-team decisions. Workspace agents are designed for this type of work: they can gather context from the right systems, follow team processes, request approval when needed, and coordinate work across different tools,” OpenAI said in its announcement.

As an example, OpenAI described an agent used by a sales team. The assistant can combine information from call notes and customer research, qualify new leads, and prepare draft follow-up emails for a sales manager.

“This means less time spent stitching details together and more time spent talking to customers,” the company noted.

Workspace agents are available through a research preview for ChatGPT Business, Enterprise, and Edu plans, as well as ChatGPT for Teachers for K–12 education.

OpenAI Adds a Privacy Protection Tool

OpenAI also released a free tool called Privacy Filter, designed to remove sensitive information from messages before ChatGPT sees them. Such information can include tax documents, medical records, work emails containing client names, and API keys.

Privacy Filter is available on Hugging Face and GitHub. The model has 1.5 billion parameters, and anyone can download, use, or modify the software.

When a user enters text into a chatbot conversation, the tool automatically replaces sensitive details with generic placeholders such as [PRIVATE_PERSON] or [ACCOUNT_NUMBER].

The filter scans text for eight categories of personal information: names, addresses, email addresses, phone numbers, URLs, dates, account numbers, and secret credentials such as passwords and API keys.
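To make the placeholder idea concrete, here is a minimal Python sketch of the substitution step. It is not Privacy Filter's code or API: the real tool is a learned model, while this sketch swaps in two simple regular expressions as a stand-in. The function name `mask`, the patterns, and the `[PRIVATE_EMAIL]` label are assumptions made for illustration; only `[ACCOUNT_NUMBER]` appears in OpenAI's examples above.

```python
import re

# Illustrative stand-in only: Privacy Filter is a learned model, not a regex list.
# The patterns and the [PRIVATE_EMAIL] label are hypothetical.
PATTERNS = [
    ("[PRIVATE_EMAIL]", re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")),
    ("[ACCOUNT_NUMBER]", re.compile(r"\b\d{8,16}\b")),
]


def mask(text: str) -> str:
    """Replace each matched span with its generic placeholder."""
    for placeholder, pattern in PATTERNS:
        text = pattern.sub(placeholder, text)
    return text


if __name__ == "__main__":
    print(mask("Contact ana@example.com about account 1234567890."))
```

A pattern-based approach like this misses anything it has no rule for, such as personal names, which is presumably one reason the actual tool relies on a trained model instead.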

According to OpenAI, the model is highly effective at detecting sensitive information. It scored 96% in a standard evaluation using the PII-Masking-300k dataset.
