Qwen3.5-Omni, the latest generation in Alibaba's Qwen family, comes in three Instruct variants (Plus, Flash, and Light) and supports contexts of up to 256,000 tokens. According to the Qwen team, it can process more than ten hours of audio and over 400 seconds of 720p video sampled at one frame per second. The model was natively pretrained as an omnimodal model on more than 100 million hours of audiovisual material, and it can generate speech as well as text.

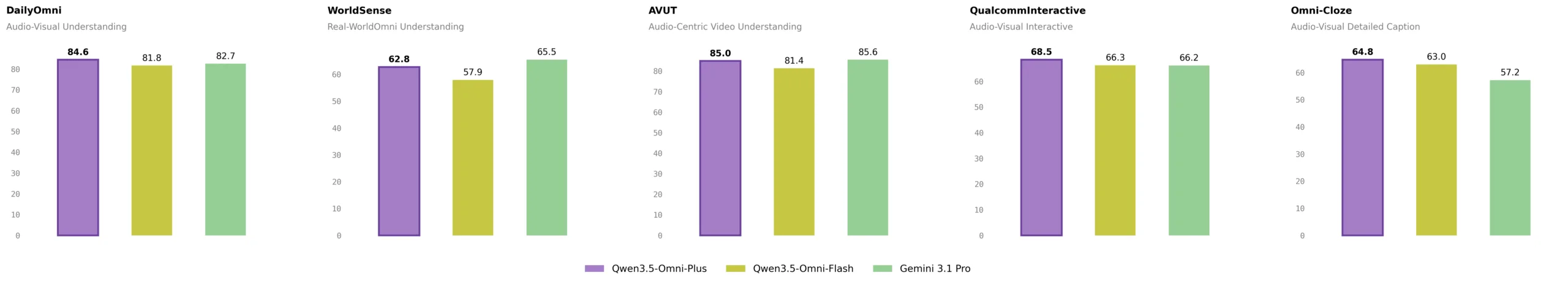

215 benchmarks: Qwen3.5-Omni-Plus is said to beat Gemini 3.1 Pro in audio

According to the Qwen team, the Plus variant sets a new state of the art across 215 audio and audiovisual subtasks. These include three audiovisual benchmarks, five audio benchmarks, eight speech recognition benchmarks, 156 language-specific translation tasks, and 43 language-specific recognition tasks.
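The five categories do add up to the headline figure. A quick tally of the counts reported by the Qwen team:

```python
# Subtask counts reported by the Qwen team for Qwen3.5-Omni-Plus
subtasks = {
    "audiovisual benchmarks": 3,
    "audio benchmarks": 5,
    "speech recognition benchmarks": 8,
    "language-specific translation tasks": 156,
    "language-specific recognition tasks": 43,
}

total = sum(subtasks.values())
print(f"Total subtasks: {total}")  # Total subtasks: 215
```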

Qwen3.5-Omni-Plus is said to outperform Google’s Gemini 3.1 Pro in general audio understanding, reasoning, recognition, translation, and dialogue. In overall audiovisual understanding, the model is said to be on par with Gemini 3.1 Pro.

In the published results, Qwen3.5-Omni-Plus scores 82.2 on audio understanding (MMAU), versus 81.1 for Gemini 3.1 Pro. In music understanding (RUL-MuChoMusic), the gap is wider: 72.4 versus 59.6. On the VoiceBench spoken-dialogue benchmark, the model scores 93.1 versus Gemini's 88.9. Its visual and text capabilities are said to match those of the text-only Qwen3.5 models of similar size.

For speech generation, the Qwen team compares the model with ElevenLabs, Gemini 2.5 Pro, GPT-Audio, and MiniMax. On the demanding "Seed-hard" test set, Qwen3.5-Omni-Plus achieves a word error rate of 6.24, compared with 8.19 for GPT-Audio, 8.62 for MiniMax, and 27.70 for ElevenLabs. In voice cloning across 20 languages, the model reaches a word error rate of 1.87 and a speaker-similarity (cosine) score of 0.79.
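Word error rate is the usual yardstick for both speech recognition and, typically by transcribing the generated audio, speech synthesis: the number of word substitutions, deletions, and insertions needed to turn the output into the reference, divided by the number of reference words. The scores above are most likely percentages. Voice-cloning similarity is commonly the cosine similarity between speaker embeddings of the reference voice and the cloned output. The sketch below implements both textbook definitions in plain Python; the Qwen team's text normalization and speaker-embedding model are not stated, so this is not their evaluation pipeline.

```python
import math


def word_error_rate(reference: str, hypothesis: str) -> float:
    """(substitutions + deletions + insertions) / number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # dp[i][j] = minimum number of edits turning ref[:i] into hyp[:j]
    dp = [[0] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(len(ref) + 1):
        dp[i][0] = i  # delete all i reference words
    for j in range(len(hyp) + 1):
        dp[0][j] = j  # insert all j hypothesis words
    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            dp[i][j] = min(
                dp[i - 1][j] + 1,         # deletion
                dp[i][j - 1] + 1,         # insertion
                dp[i - 1][j - 1] + cost,  # substitution (or match if cost is 0)
            )
    return dp[len(ref)][len(hyp)] / len(ref)


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two speaker-embedding vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# One substituted word out of five reference words gives a WER of 0.2, i.e. 20%.
print(word_error_rate("the cat sat on mats", "the cat sat on mat"))  # 0.2
# Toy 3-dimensional embeddings; real speaker encoders produce much longer vectors.
print(round(cosine_similarity([1.0, 0.0, 1.0], [1.0, 1.0, 1.0]), 3))  # 0.816
```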

From 11 to 74 languages: a nearly sevenfold expansion in speech recognition

Compared with its predecessor Qwen3-Omni, the Qwen team has massively expanded language support. Speech recognition now covers 74 languages and 39 Chinese dialects, for a total of 113 languages and dialects. The predecessor supported 11 languages and 8 Chinese dialects.
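Putting the reported coverage figures side by side gives the growth factors directly:

```python
# Speech recognition coverage as reported by the Qwen team
qwen3_omni = {"languages": 11, "chinese_dialects": 8}
qwen35_omni = {"languages": 74, "chinese_dialects": 39}

old_total = sum(qwen3_omni.values())   # 19 languages and dialects
new_total = sum(qwen35_omni.values())  # 113 languages and dialects

language_factor = qwen35_omni["languages"] / qwen3_omni["languages"]
total_factor = new_total / old_total

print(f"Languages only:       {language_factor:.1f}x")  # 6.7x
print(f"Languages + dialects: {total_factor:.1f}x")     # 5.9x
```

That is roughly a sevenfold increase in languages and about a sixfold increase once Chinese dialects are included.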

Speech output now supports 36 languages and dialects. A total of 55 different voices are available, including custom, scenario-specific, dialectal, and multilingual options.

On the FLEURS dataset, across its top 60 languages, Qwen3.5-Omni-Plus achieves a word error rate of 6.55, compared with 7.32 for Gemini 3.1 Pro. For Chinese varieties such as Cantonese, the lead is much larger: 1.95 versus 13.40. The context window has also expanded significantly, from 32,000 to 256,000 tokens.
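Absolute gaps in word error rate are hard to compare across benchmarks with very different error levels, so relative error reduction is a common way to read them. A small sketch using the figures reported above, with Gemini 3.1 Pro as the baseline:

```python
def relative_reduction(baseline: float, new: float) -> float:
    """Fraction by which `new` lowers an error rate relative to `baseline`."""
    return (baseline - new) / baseline


# Word error rates reported for Qwen3.5-Omni-Plus vs. Gemini 3.1 Pro
print(f"FLEURS top 60: {relative_reduction(7.32, 6.55):.1%}")   # 10.5%
print(f"Cantonese:     {relative_reduction(13.40, 1.95):.1%}")  # 85.4%

# Context window growth from Qwen3-Omni to Qwen3.5-Omni
print(f"Context window: {256_000 / 32_000:.0f}x")  # 8x
```

By this measure, the FLEURS lead amounts to about a 10 percent relative reduction in errors, the Cantonese result to roughly 85 percent, and the context window grows eightfold.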

ARIA aims to solve a well-known speech output problem

The architecture still follows the Thinker-Talker principle. The Thinker analyzes omnimodal inputs and generates text, while the Talker converts that into context-aware speech. Both components now use a Hybrid-Attention MoE architecture instead of the pure Mixture-of-Experts design used in the predecessor.
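The division of labor can be illustrated with a toy streaming pipeline. Everything here is hypothetical (the class and function names, the "hidden" vector, the fake speech tokens) and only mirrors the data flow described above, in which a Thinker streams text plus internal state and a Talker turns each piece into speech as it arrives. It is not Qwen's implementation.

```python
from collections.abc import Iterator
from dataclasses import dataclass


@dataclass
class ThinkerStep:
    text_token: str      # one token of the Thinker's text answer
    hidden: list[float]  # stand-in for the representation handed to the Talker


def thinker(inputs: dict[str, str]) -> Iterator[ThinkerStep]:
    """Toy Thinker: 'understands' the inputs and streams text tokens one by one."""
    answer = f"Heard {inputs['audio']} and saw {inputs['video']}"
    for position, token in enumerate(answer.split()):
        yield ThinkerStep(text_token=token, hidden=[float(position)])


def talker(steps: Iterator[ThinkerStep]) -> Iterator[str]:
    """Toy Talker: turns each Thinker step into placeholder speech tokens as it arrives."""
    for step in steps:
        # A real Talker would predict audio codec tokens conditioned on the hidden
        # state and conversation context; here each character becomes one fake
        # speech token tagged with the Thinker position it came from.
        position = int(step.hidden[0])
        for ch in step.text_token:
            yield f"<{position}:{ch}>"


# The Talker can emit speech before the Thinker has finished its answer.
stream = talker(thinker({"audio": "rain", "video": "a street"}))
print(next(stream))  # <0:H>
```

Because both stages are generators, the first speech token is available after the Thinker's first text token, which is the property that matters for real-time conversation.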

The main technical innovation is called ARIA, short for Adaptive Rate Interleave Alignment. This method dynamically aligns text and speech tokens and interleaves them. The Qwen team says it is designed to solve a common issue in real-time speech generation: because text and speech tokens are encoded with different efficiency, streaming conversations often suffer from omissions, slips, or poorly pronounced numbers.

ARIA is intended to make speech synthesis more natural and robust without sacrificing real-time performance. The predecessor still relied on a rigid 1:1 mapping between text and audio tokens.
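The article does not describe how ARIA computes its rates, so the following is only a toy illustration of the problem it targets, not ARIA itself. It assumes a hypothetical cost model in which spelled-out numbers need far more speech tokens than ordinary words, and compares a rigid schedule with one speech slot per text token against an adaptive schedule that allocates slots according to that cost.

```python
def speech_cost(token: str) -> int:
    """Hypothetical cost model: speech tokens needed to pronounce one text token.

    Digits are read out in full ("2026" -> "two thousand twenty-six"),
    so they need far more audio than their text length suggests.
    """
    if token.isdigit():
        return 3 * len(token)
    return max(1, len(token) // 2)


def schedule(tokens: list[str], adaptive: bool) -> list[tuple[str, int]]:
    """Interleave text and speech slots as ("text", i) / ("speech", i) for token i."""
    out: list[tuple[str, int]] = []
    for i, token in enumerate(tokens):
        out.append(("text", i))
        # Rigid 1:1 mapping vs. a rate that adapts to each token's estimated cost.
        n_speech = speech_cost(token) if adaptive else 1
        out.extend(("speech", i) for _ in range(n_speech))
    return out


def shortfall(tokens: list[str], sched: list[tuple[str, int]]) -> list[int]:
    """Per-token gap between speech tokens needed and speech slots allocated."""
    allocated = [0] * len(tokens)
    for kind, i in sched:
        if kind == "speech":
            allocated[i] += 1
    return [speech_cost(t) - a for t, a in zip(tokens, allocated)]


tokens = ["The", "year", "is", "2026"]
print(shortfall(tokens, schedule(tokens, adaptive=False)))  # [0, 1, 0, 11]
print(shortfall(tokens, schedule(tokens, adaptive=True)))   # [0, 0, 0, 0]
```

Under the rigid schedule the number "2026" gets one speech slot but needs twelve, the kind of mismatch that surfaces as dropped or garbled numbers in streaming speech; the adaptive schedule leaves no shortfall.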

Coding from video and voice emerges as a new capability

According to the Qwen team, scaling omnimodal training produced an unexpected capability. The model can write code directly from spoken instructions and video content. The team calls this “Audio-Visual Vibe Coding.”

In published demos, Qwen3.5-Omni-Plus generates a working Snake game from a spoken description and a video clip. The team says this ability was not explicitly trained, but emerged from native multimodal scaling.

The model can also describe audio and video content in such detail that the outputs resemble scripts. It automatically segments content, adds second-level timestamps, and provides fine-grained details on characters, dialogue, sound effects, and how they interact.

In one demo, the model breaks down a three-minute lion documentary scene by scene, identifying every speaker, cut, and sound. Another demo shows how it detects violent scenes in video games for content moderation and lists them in a table with timestamps and risk levels.

Real-time interaction with smart interruption and web search

For real-time conversations, Qwen3.5-Omni includes several features that were missing from its predecessor. Its “semantic interruption” capability detects whether a user actually intends to speak and ignores background noise or short interjections.
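Qwen has not said how semantic interruption works internally, and it is presumably learned by the model rather than rule-based. As a rough illustration of the decision involved, here is a hypothetical rule-based stand-in that ignores non-speech audio and short backchannel words and only yields the floor once the user has spoken for a minimum duration. The word list and the 0.6-second threshold are assumptions.

```python
from dataclasses import dataclass

# Hypothetical list of short acknowledgements that should not stop the assistant.
BACKCHANNELS = {"mm", "mhm", "uh-huh", "yeah", "ok", "okay", "right"}


@dataclass
class UserAudioEvent:
    transcript: str    # partial transcript of what the user has said so far
    duration_s: float  # how long the user has been speaking, in seconds
    is_speech: bool    # voice-activity verdict: False for background noise


def should_interrupt(event: UserAudioEvent, min_duration_s: float = 0.6) -> bool:
    """Rule-based stand-in for semantic interruption; the threshold is an assumption."""
    if not event.is_speech:
        return False  # background noise: keep talking
    words = [w.strip(".,!?") for w in event.transcript.lower().split()]
    if words and all(w in BACKCHANNELS for w in words):
        return False  # brief acknowledgement such as "uh-huh": keep talking
    return event.duration_s >= min_duration_s  # sustained speech: yield the floor


print(should_interrupt(UserAudioEvent("", 1.2, is_speech=False)))                    # False
print(should_interrupt(UserAudioEvent("Uh-huh.", 0.4, is_speech=True)))              # False
print(should_interrupt(UserAudioEvent("Wait, stop a second", 1.1, is_speech=True)))  # True
```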

The model can independently decide whether to run a web search to answer questions about current events, and it supports complex function calling. Users can also adjust the model's speaking style with voice commands, controlling volume, speed, and emotion during the conversation. Through voice cloning, users can upload a sample of their own voice and have the AI assistant speak with it.

According to the Qwen team, all of these features are available through the Realtime API. The model is also accessible via Qwen Chat and Alibaba Cloud Model Studio.

Unlike earlier Qwen releases such as Qwen3-Omni and the Qwen3.5 text models, Alibaba has not published model weights or named a license. For now, Qwen3.5-Omni is available only as an API service.

Qwen3.5-Omni arrives amid team turbulence and a wave of model releases

Qwen3.5-Omni is part of a fast-paced release cycle. Alibaba introduced its predecessor, Qwen3-Omni, as recently as September 2025. According to Alibaba, that 30-billion-parameter model achieved top results on 32 of 36 audio and video benchmarks and responded to audio-only input with a latency of 211 milliseconds.

Alibaba has also expanded the Qwen3.5 text model family to four models. Its flagship, Qwen3.5-397B-A17B, uses a Mixture-of-Experts architecture with 397 billion total parameters, of which 17 billion are active per token.
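In a Mixture-of-Experts model, a router sends each token to only a few experts, so only a fraction of the parameters (here 17 of 397 billion) does work for any given token. The first lines compute that fraction; the rest is a generic top-k routing sketch with made-up router scores for eight experts. Qwen3.5's actual expert count, value of k, and any shared experts are not given in the article, so treat this as an illustration of the general technique only.

```python
import math

TOTAL_PARAMS = 397e9
ACTIVE_PARAMS = 17e9
print(f"Active per token: {ACTIVE_PARAMS / TOTAL_PARAMS:.1%}")  # 4.3%


def top_k_route(logits: list[float], k: int) -> dict[int, float]:
    """Pick the k highest-scoring experts and renormalize their softmax weights."""
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    m = max(logits[i] for i in top)  # subtract the max for numerical stability
    exps = {i: math.exp(logits[i] - m) for i in top}
    z = sum(exps.values())
    return {i: e / z for i, e in exps.items()}


# Hypothetical router scores for 8 experts; only 2 of them run for this token.
weights = top_k_route([0.1, 2.0, -1.0, 1.5, 0.0, 0.3, -0.5, 0.9], k=2)
print(sorted(weights))  # [1, 3]
```

The token's output would then be the weighted sum of the two selected experts' outputs, while the other six experts stay idle for that token.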

However, the release comes during a turbulent phase. Junyang Lin, Alibaba's chief AI developer and the leading figure behind the entire Qwen model family, recently and unexpectedly announced his resignation. Other key team members followed, including the leads responsible for Qwen-Coder, post-training, and Qwen3.5/VL.

The reported trigger was an internal restructuring in which a researcher poached from Google’s Gemini team was supposed to take over leadership. Alibaba CEO Eddie Wu then announced a new “Foundation Model Task Force” and emphasized that advancing foundation models remains a “core strategic priority.”
